Fashion and interior design are two industries where the visual appeal of products matters a lot. It wouldn’t be wrong to say that both are largely a game of appearance and looks. To meet a client’s expectations and the practical needs of a design, fashion and interior designers have to put in a lot of effort and deal with their fair share of struggles. At times, vague or incomplete input from clients adds to that burden.

The advent of AI and computer vision has made the job of fashion and interior designers much easier, as these two technologies help professionals make customized yet appealing product recommendations.

Additionally, multi-modal search engines are on the rise. This technology lets interior and fashion designers handle visual and text-based customer queries in one place and make accurate, well-fitting product recommendations.

If this technology is new to you and, as a fashion or interior designer, you want to know more about it, this blog covers the key points.

The Existing Problem

The fashion and interior design industries already have various tools that help professionals find visually appealing products and suggest similar ones. However, these tools fail to match a product’s style and context with the proposed design plan; they suggest products based only on the object’s appearance.

Textual representation tools such as word2vec help only when contextual information is available in the training corpus. They are mainly useful for spotting products that commonly go together, such as chair and table, pants and shirt, or shoes and socks.

This makes textual search limited and restrictive, especially in interior design, because it cannot describe the various styles in detail. For instance, it won’t produce accurate recommendations if a user asks for a Scandinavian or rustic style.

Multi-Modal Search Engine – Making Product Recommendations Effortless

The issues above were largely resolved by combining the best of visual and textual search. The result was a multi-modal search engine, the Style Search Engine, which retrieves products that are visually similar to the query and also match the user’s detailed textual input.

The engine’s visual search block relies on YOLO 9000, a state-of-the-art object detection algorithm, and a deep neural network. Its textual search block lets end users make the search more specific with a text description, which increases the contextual relevance of the retrieved results.

To make the suggestions more useful, the engine then blends the visual and textual search results using their similarity scores in the respective feature spaces.
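To make the blending step concrete, here is a minimal Python sketch. It assumes both blocks produce vectors and combines the two cosine similarities with a weighted sum; the weighted sum, the `alpha` weight, the function names, and the toy catalog are illustrative assumptions, not the Style Search Engine’s documented fusion rule.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def blend(visual_query, text_query, catalog, alpha=0.5, top_k=3):
    """Rank catalog items by a weighted sum of visual and textual similarity.

    catalog maps item id -> (visual_vector, text_vector); alpha weights the
    visual score and (1 - alpha) weights the textual score.
    """
    scored = []
    for item_id, (visual_vec, text_vec) in catalog.items():
        score = (alpha * cosine(visual_query, visual_vec)
                 + (1 - alpha) * cosine(text_query, text_vec))
        scored.append((item_id, score))
    # Highest blended score first; sorted() is stable, so ties keep catalog order.
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


# Hypothetical 2-d (visual, textual) feature vectors for three products.
CATALOG = {
    "oak_chair": ([1.0, 0.0], [0.0, 1.0]),
    "velvet_sofa": ([0.9, 0.1], [1.0, 0.0]),
    "steel_lamp": ([0.0, 1.0], [1.0, 0.0]),
}

if __name__ == "__main__":
    print(blend([1.0, 0.0], [1.0, 0.0], CATALOG, alpha=0.5))
```

With `alpha=0.5` the velvet sofa, which matches both the picture and the text, ranks first. Setting `alpha=1.0` ignores the text entirely, and the oak chair, the closest pure visual match, moves to the top.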

Together, these steps improve the stylistic and aesthetic similarity of the retrieved products. In evaluation, the tool improved product recommendation performance by 11%.

How Does a Multi-modal Search Engine Work?

This section walks through how a multi-modal search engine works. As input, the engine accepts queries in the form of images and text.

The image query is a picture of an interior, such as a living room or a bedroom. The text query consists of descriptive terms, such as cozy, modern, aesthetic, or fluffy.

Once the inputs are received, an object detection algorithm locates the objects in the uploaded image and identifies the class of each one, such as chair, table, or sofa.

For each detected object, the region of interest is cropped into an image patch and passed to the visual search block. The text query goes through the textual search block in the same way.
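The crop step can be sketched as follows, assuming the detector returns a class label, a confidence score, and a pixel-space bounding box for each object. The `Detection` type, the 0.5 confidence threshold, and the nested-list stand-in for an image are hypothetical choices made to keep the example dependency-free.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    label: str                        # predicted object class, e.g. "chair"
    confidence: float                 # detector confidence in [0, 1]
    box: Tuple[int, int, int, int]    # (x_min, y_min, x_max, y_max) in pixels


def crop_rois(image: List[list], detections: List[Detection], min_confidence: float = 0.5):
    """Cut out one image patch per confident detection.

    image is a row-major grid (a list of pixel rows); a real pipeline would
    slice an image array the same way.
    """
    height, width = len(image), len(image[0])
    patches = []
    for det in detections:
        if det.confidence < min_confidence:
            continue  # drop low-confidence boxes
        x0, y0, x1, y1 = det.box
        # Clamp the box to the image borders.
        x0, y0 = max(0, x0), max(0, y0)
        x1, y1 = min(width, x1), min(height, y1)
        if x1 <= x0 or y1 <= y0:
            continue  # nothing left after clamping
        patch = [row[x0:x1] for row in image[y0:y1]]
        patches.append((det.label, patch))
    return patches


# A 4x4 "image" whose pixel values are just their positions 0..15.
IMAGE = [[r * 4 + c for c in range(4)] for r in range(4)]
DETECTIONS = [
    Detection("chair", 0.9, (0, 0, 2, 2)),
    Detection("lamp", 0.3, (2, 2, 4, 4)),   # below threshold, skipped
    Detection("sofa", 0.8, (2, 1, 10, 3)),  # extends past the edge, clamped
]

if __name__ == "__main__":
    for label, patch in crop_rois(IMAGE, DETECTIONS):
        print(label, patch)
```

Each returned patch keeps its class label, so the visual search can later restrict matches to products of the same class.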

The engine then finds visual and textual matches, and its blending algorithm ranks them by how similar they are to the query in the respective feature spaces.

Source: Wikipedia

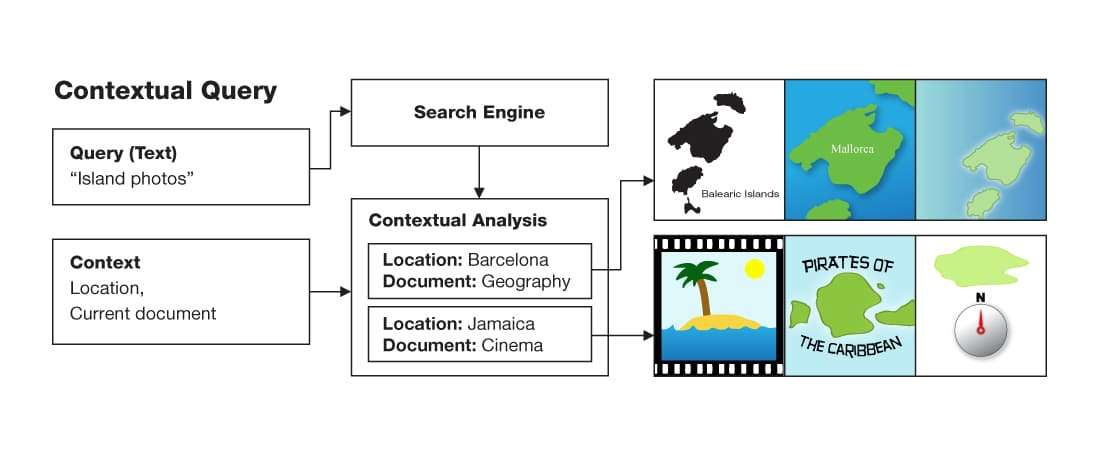

The image above shows the general workflow of a multi-modal search engine.

The visual search block does not use the whole image of the interior space or fashion apparel. Instead, object detection serves as a pre-processing step, so only the detected objects are searched rather than the entire scene. This makes the retrieved results more accurate and precise.

This approach goes beyond conventional visual search engines, which rely on human-labeled object categories. The object detector, YOLO 9000, is built on the Darknet-19 model, which has 19 convolutional layers and 5 max-pooling layers. With it, the engine can identify different classes of furniture and locate each object with a bounding box.
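The layer counts above can be checked against the Darknet-19 layer table published with YOLO9000 (the classification version, whose last 1x1 layer scores 1,000 ImageNet classes). The sketch below encodes that layer list and shows why a 416x416 input, the resolution YOLOv2 uses for detection, ends up as a 13x13 grid: each of the five stride-2 max-pooling layers halves the spatial size, for a total downsampling factor of 32. The list format and helper names are illustrative; for detection, YOLO replaces the final classification layer with detection layers.

```python
# Darknet-19 as listed in the YOLO9000 paper (classification version).
# Each entry is ("conv", filters, kernel_size) or ("maxpool",) for a 2x2, stride-2 pool.
DARKNET19 = [
    ("conv", 32, 3), ("maxpool",),
    ("conv", 64, 3), ("maxpool",),
    ("conv", 128, 3), ("conv", 64, 1), ("conv", 128, 3), ("maxpool",),
    ("conv", 256, 3), ("conv", 128, 1), ("conv", 256, 3), ("maxpool",),
    ("conv", 512, 3), ("conv", 256, 1), ("conv", 512, 3),
    ("conv", 256, 1), ("conv", 512, 3), ("maxpool",),
    ("conv", 1024, 3), ("conv", 512, 1), ("conv", 1024, 3),
    ("conv", 512, 1), ("conv", 1024, 3),
    ("conv", 1000, 1),  # 1x1 classifier over 1,000 ImageNet classes
]


def count_layers(layers):
    """Return (number of convolutional layers, number of max-pooling layers)."""
    convs = sum(1 for layer in layers if layer[0] == "conv")
    pools = sum(1 for layer in layers if layer[0] == "maxpool")
    return convs, pools


def output_grid_size(input_size, layers):
    """Spatial size of the final feature map.

    Convolutions are 'same'-padded with stride 1, so only the max-pooling
    layers change the size, halving it each time.
    """
    size = input_size
    for layer in layers:
        if layer[0] == "maxpool":
            size //= 2
    return size


if __name__ == "__main__":
    print(count_layers(DARKNET19))           # conv and pool counts
    print(output_grid_size(416, DARKNET19))  # detection-resolution grid
```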

The bounding boxes are then used to create Regions of Interest (ROIs) in the image, and visual search runs on these extracted ROIs. To optimize the results, the engine takes the outputs of a fully connected layer of a neural network as feature vectors and normalizes them.
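A minimal sketch of this step, assuming each ROI and each catalog product has already been turned into a vector of fully connected layer activations: L2-normalizing the vectors makes their dot product equal to cosine similarity, so the nearest products are simply those with the highest dot product. The toy three-item catalog and the function names are hypothetical.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def build_index(catalog_features):
    """Normalize every product's fully connected layer features once, up front."""
    return {item_id: l2_normalize(feats) for item_id, feats in catalog_features.items()}


def visual_search(roi_features, index, top_k=2):
    """Return the top_k (item_id, similarity) pairs for one ROI's features."""
    query = l2_normalize(roi_features)
    scores = [(item_id, sum(a * b for a, b in zip(query, vec)))
              for item_id, vec in index.items()]
    scores.sort(key=lambda pair: pair[1], reverse=True)
    return scores[:top_k]


# Hypothetical raw activations; real ones typically have thousands of dimensions.
INDEX = build_index({
    "armchair": [6.0, 8.0],
    "stool": [4.0, -3.0],
    "ottoman": [1.0, 1.0],
})

if __name__ == "__main__":
    print(visual_search([3.0, 4.0], INDEX))
```

Normalizing the catalog once at indexing time means each query only costs one normalization plus a dot product per product. The armchair’s raw vector is twice the query’s, yet it scores as a perfect match because only direction matters after normalization.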

For the text query, the engine uses textual search to narrow the results and make them more specific.

The textual search is advanced enough to handle queries about interior items that represent abstract ideas.

For instance, minimalism or the Scandinavian style has no single set of characteristics that defines it. Even so, the textual search mechanism of the multi-modal search engine can return relevant results for such queries.
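One simple way to score items against an abstract query such as "Scandinavian" is to average the word vectors of the query and of each item’s description, then compare them with cosine similarity. The sketch below assumes that averaging scheme and uses tiny hand-made word vectors purely for illustration; a real engine would learn its vectors from data, and its exact scoring may differ.

```python
import math

# Hypothetical 3-d word vectors; a real engine would learn these from data.
WORD_VECTORS = {
    "scandinavian": [0.9, 0.1, 0.0],
    "minimal": [0.8, 0.2, 0.1],
    "white": [0.9, 0.0, 0.1],
    "wood": [0.5, 0.7, 0.1],
    "rustic": [0.1, 0.9, 0.2],
    "leather": [0.0, 0.3, 0.9],
}


def embed(text):
    """Average the vectors of known words in text; None if no word is known."""
    known = [WORD_VECTORS[w] for w in text.lower().split() if w in WORD_VECTORS]
    if not known:
        return None
    return [sum(dim) / len(known) for dim in zip(*known)]


def cosine(a, b):
    """Cosine similarity of two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def text_search(query, descriptions):
    """Rank items by cosine similarity between query and description embeddings."""
    q = embed(query)
    if q is None:
        return []  # no query word is in the vocabulary
    ranked = []
    for item_id, text in descriptions.items():
        d = embed(text)
        if d is not None:
            ranked.append((item_id, cosine(q, d)))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


DESCRIPTIONS = {
    "armchair": "rustic leather",
    "shelf": "white minimal wood",
    "table": "rustic wood",
}

if __name__ == "__main__":
    print(text_search("Scandinavian", DESCRIPTIONS))
```

No description contains the word "scandinavian", yet the white minimal wood shelf ranks first because its words sit close to that term in the (here hand-made) vector space.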

The text embedding space is built with the Continuous Bag-of-Words (CBOW) model from the word2vec family. Descriptions related to common household items and fashion designs, such as rooms, kitchens, clothing style, and length, are represented in this space.

What makes this embedding approach stand out is that it requires no linguistic knowledge. It captures only the objects and information that appear together in a room.
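The CBOW idea can be sketched in two parts, assuming each room is treated as a "sentence" made of the objects and descriptive words that appear in it: building (context, target) training pairs, and the model’s forward pass, which averages the context word vectors and applies a softmax over every vocabulary word. Gradient updates are omitted; in practice a library such as gensim (`Word2Vec` with `sg=0`) handles training. The room data and the hand-picked vectors are hypothetical.

```python
import math


def cbow_pairs(rooms, window=2):
    """Build (context words, target word) CBOW training pairs.

    Each room is a list of tokens: the objects and descriptive words that
    appear together in that room, treated like a sentence.
    """
    pairs = []
    for tokens in rooms:
        for i, target in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            context = tokens[lo:i] + tokens[i + 1:hi]
            if context:
                pairs.append((context, target))
    return pairs


def cbow_probabilities(context, input_vecs, output_vecs):
    """CBOW forward pass: average the context word vectors, then apply a
    softmax over the dot products with every word's output vector."""
    hidden = [sum(dims) / len(context) for dims in zip(*(input_vecs[w] for w in context))]
    logits = {w: sum(h * v for h, v in zip(hidden, vec)) for w, vec in output_vecs.items()}
    top = max(logits.values())  # subtract the max for numerical stability
    exps = {w: math.exp(x - top) for w, x in logits.items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}


ROOMS = [["sofa", "rug", "lamp", "cozy"]]

# Hypothetical 2-d vectors standing in for trained embeddings.
INPUT_VECS = {"sofa": [1.0, 0.0], "rug": [0.0, 1.0], "lamp": [1.0, 1.0], "cozy": [0.5, 0.5]}
OUTPUT_VECS = {"sofa": [0.2, 0.1], "rug": [1.0, 0.5], "lamp": [0.1, 0.3], "cozy": [0.3, 0.3]}

if __name__ == "__main__":
    pairs = cbow_pairs(ROOMS, window=1)
    context, target = pairs[1]
    probs = cbow_probabilities(context, INPUT_VECS, OUTPUT_VECS)
    print(context, target, max(probs, key=probs.get))
```

Training would adjust both vector tables so that, across all rooms, each target word gets a high probability from its context. Words for objects that keep appearing together therefore end up with similar vectors.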

Requirements for Using a Multi-modal Search Engine

While a multi-modal search engine is a great way to improve the accuracy of product recommendations for fashion and interior designers, certain criteria must be met for it to work properly. These conditions are:

- The inputs should include images of individual objects as well as room scene images that contain those objects.

- The details of the objects shown in a given design scenario should be clear and precise.

- Every room image should come with a textual description.

If any of these criteria is not met, the search engine will not work properly, so make sure all of them are fulfilled.
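Before indexing a collection, it can help to check each room against the three requirements above. The sketch below assumes a hypothetical `RoomScene` record holding the room photo, a mapping of object labels to their individual images, and a text description; the field names and messages are illustrative, not part of any published tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class RoomScene:
    room_image: str                                        # path to the photo of the whole room
    objects: Dict[str, str] = field(default_factory=dict)  # object label -> path to its own image
    description: str = ""                                  # free-text description of the room


def missing_requirements(scene: RoomScene) -> List[str]:
    """List which input requirements a room scene fails (empty if none)."""
    problems = []
    if not scene.room_image:
        problems.append("room image")
    if not scene.objects:
        problems.append("object images")
    for label, path in scene.objects.items():
        # Each object needs a clear label and its own image.
        if not label.strip() or not path:
            problems.append(f"incomplete object entry: {label!r}")
    if not scene.description.strip():
        problems.append("text description")
    return problems


if __name__ == "__main__":
    print(missing_requirements(RoomScene("living.jpg", {"sofa": "sofa.jpg"}, "cozy living room")))
    print(missing_requirements(RoomScene("bedroom.jpg", {"bed": ""})))
```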

Utility of Multi-modal Search Engines for Fashion and Interior Designers

As explained above, a multi-modal search engine is a reliable way to get accurate and precise recommendation results. Fashion and interior designers can use this tool to improve performance, achieve a better ROI, and use resources more efficiently.

Here are some of the benefits a multi-modal search engine brings:

- Fashion and interior designers can get accurate results even with few inputs, since retrieval does not require many details.

- Because everything is automated, a great deal of effort and processing time is saved. While recommendations are being generated, designers can concentrate on other crucial tasks.

- Advanced multi-modal search engines come with a web-based application that lets end users pick a preloaded image or upload one of their own. This makes product recommendations more relevant to the user’s own space.

- When a client has only a vague idea of the end result, finding the best match is tough for designers. A multi-modal search engine makes immediate and accurate product recommendations even from minimal inputs.

- Processing visual and textual queries together makes results precise, allowing designers to offer a high degree of customization and deliver solutions just as customers envisioned them.

Ending Notes

Bringing a customer’s idea of a dream space or dress to life takes a lot of hard work, and interior and fashion designers need a helping hand to ease the process. A multi-modal search engine is a great way to eliminate much of the tedium and make near-perfect recommendations.

This technology is here to stay, given the 11% improvement in recommendation performance it has recorded. The integrated web-based application also makes image processing easy. Fashion and interior designers looking to improve their product recommendations should give this tool a try.